Visit to University of Tennessee

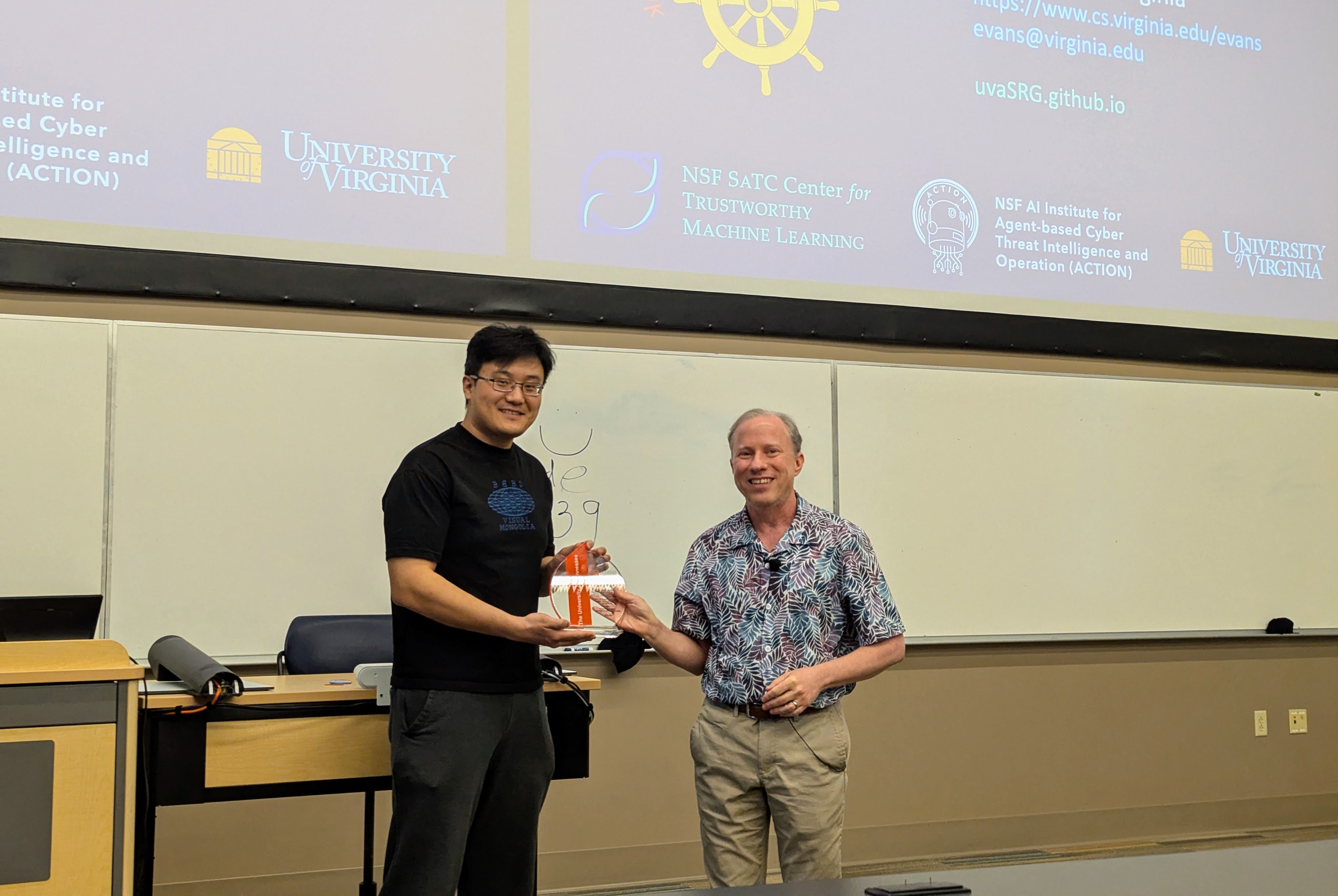

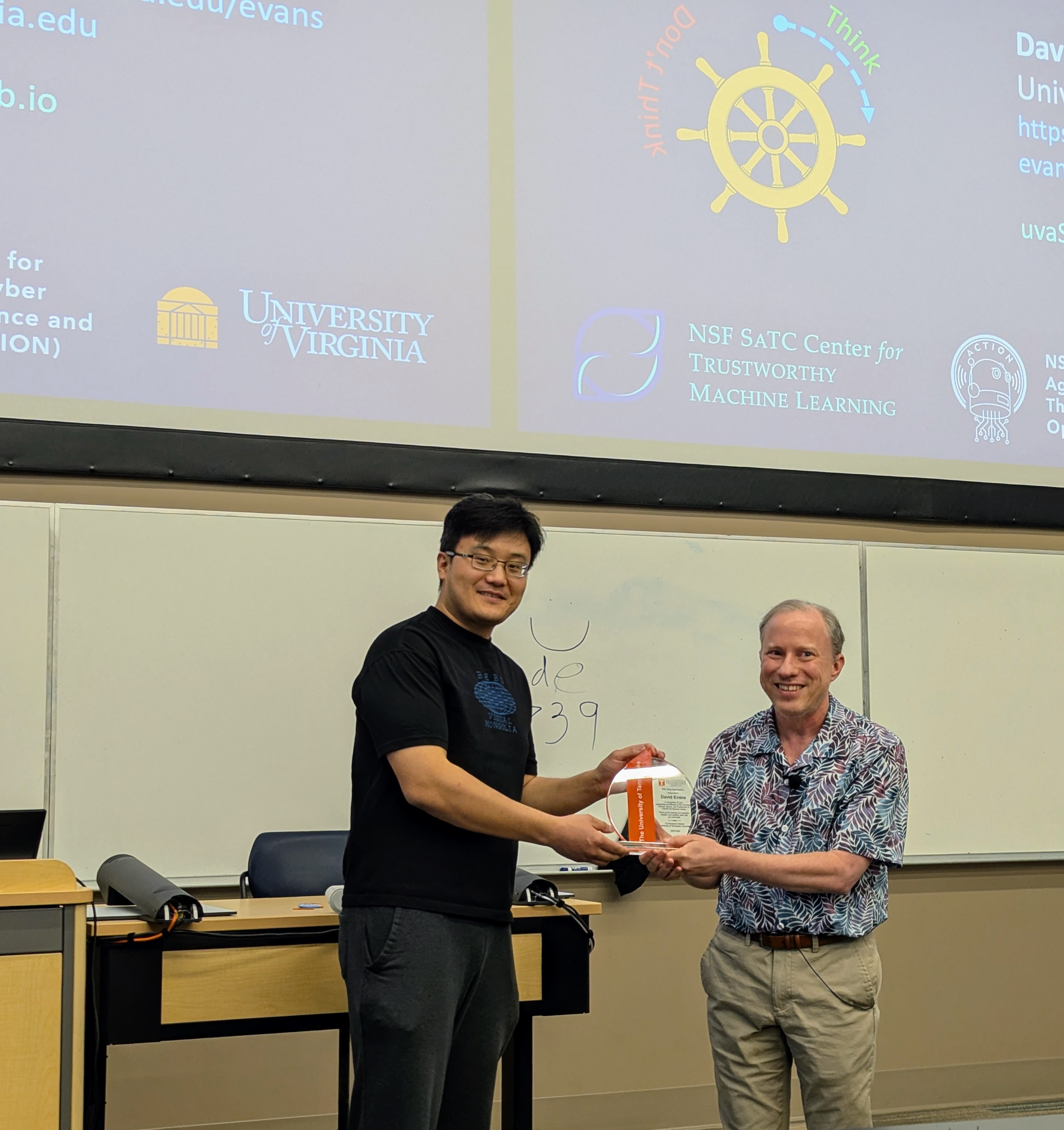

Had a great time visiting Professor Suya at the University of Tennessee, Knoxville.

I gave a talk (mostly on Hannah’s work, but also including some new work by Nia) in the Tennessee RobUst, Secure, and Trustworthy AI Seminar (TRUST-AI) organized by Suya:

- Tilting the BobbyTables and Steering the CensorShip, TRUST-AI Distinguished Seminar Series and Center for Social Theory. University of Tennessee, Knoxville. 27 March 2026.


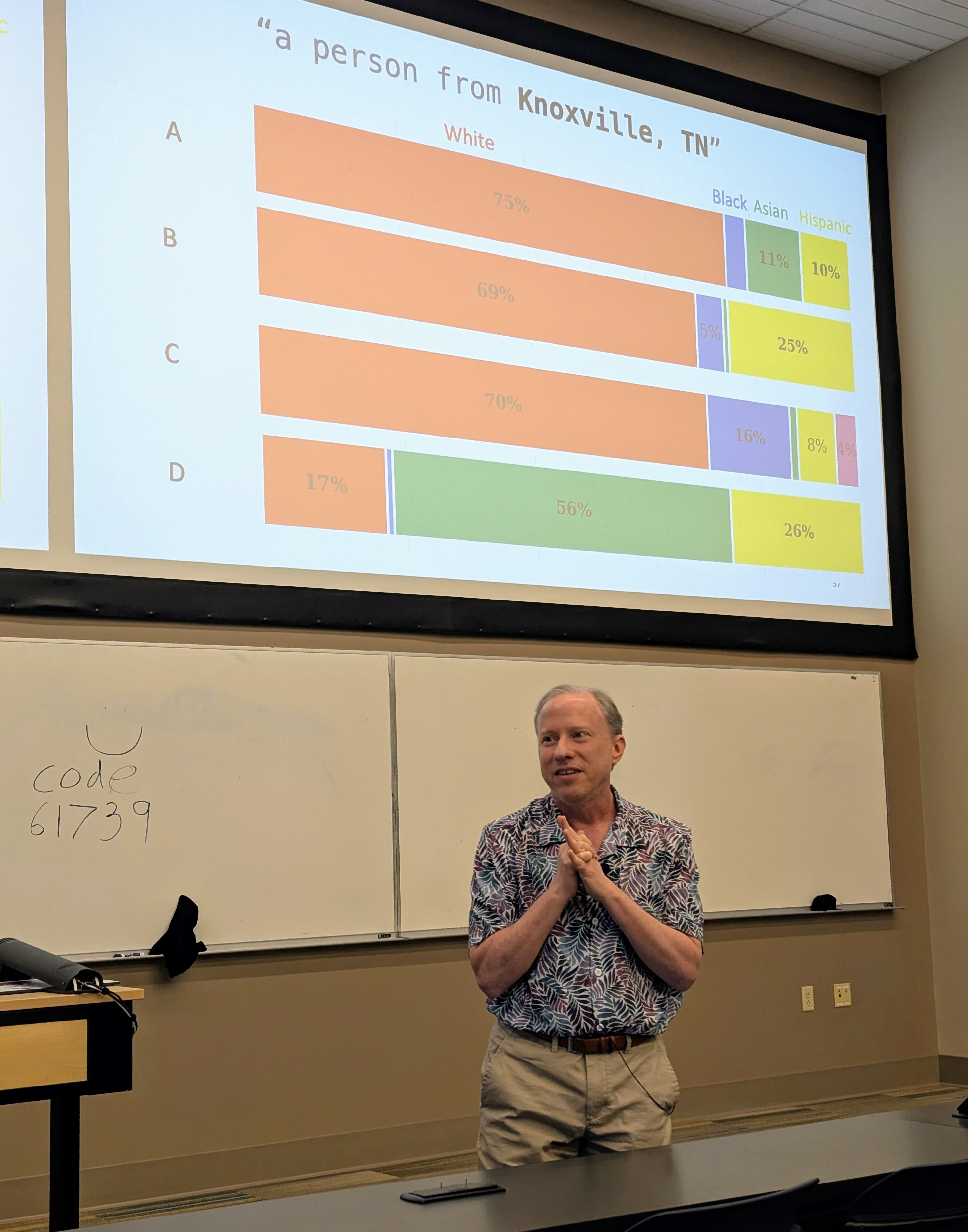

The Mismeasure of Man and Models

Evaluating Allocational Harms in Large Language Models

Blog post written by Hannah Chen

Our work considers allocational harms that arise when model predictions are used to distribute scarce resources or opportunities.

Current Bias Metrics Do Not Reliably Reflect Allocation Disparities

Several methods have been proposed to audit large language models (LLMs) for bias when used in critical decision-making, such as resume screening for hiring. Yet these methods focus on predictions, without considering how the predictions are used to make decisions. In many settings, making decisions involves prioritizing options because resources are limited. We find that prediction-based evaluation methods, which measure bias as the average performance gap (δ) in prediction outcomes, do not reliably reflect disparities in allocation decision outcomes.
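To make the distinction concrete, here is a minimal toy sketch, not the evaluation code or the exact metrics from our work. It assumes a simplified setting: two hypothetical groups of candidates, a model score per candidate, and a decision rule that selects the top `k` candidates overall. The function names `average_score_gap` and `selection_rate_gap`, the scores, and the choice of `k` are all made up for illustration.

```python
from statistics import mean

# Hypothetical model scores for candidates from two groups (illustrative only).
# Both groups have the same mean score, but group B is more spread out.
scores_a = [0.50, 0.52, 0.54, 0.56, 0.58]
scores_b = [0.30, 0.40, 0.54, 0.68, 0.78]


def average_score_gap(a, b):
    """Prediction-based view: difference in mean predicted score (A minus B)."""
    return mean(a) - mean(b)


def selection_rate_gap(a, b, k):
    """Allocation-based view: difference in the fraction of each group
    selected when only the top-k candidates overall receive the resource."""
    pooled = [(s, "A") for s in a] + [(s, "B") for s in b]
    # Rank all candidates by score, highest first, and keep the top k.
    top_k = sorted(pooled, key=lambda item: item[0], reverse=True)[:k]
    selected_a = sum(1 for _, group in top_k if group == "A")
    selected_b = sum(1 for _, group in top_k if group == "B")
    return selected_a / len(a) - selected_b / len(b)


if __name__ == "__main__":
    print("average score gap:", round(average_score_gap(scores_a, scores_b), 3))
    print("selection rate gap (k=2):", round(selection_rate_gap(scores_a, scores_b, k=2), 3))
```

In this toy data the average score gap is zero (up to floating-point rounding), yet both of the top two slots go to group B, so group A's selection rate is 0 while group B's is 2 out of 5. An audit that looked only at the average gap would report no disparity, even though the allocation outcome is entirely one-sided.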