Beyond Indistinguishability

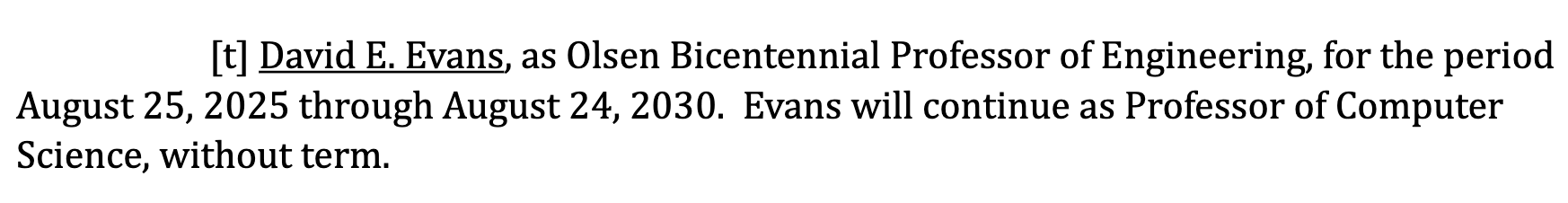

Ruixuan Liu has made an interactive demo of our work on measuring extraction risk in LLMs:

Ruixuan will present the paper at IEEE Security and Privacy:

- Ruixuan Liu, David Evans, Li Xiong. Beyond Indistinguishability: Measuring Extraction Risk in LLM APIs. In 47th IEEE Symposium on Security and Privacy (Oakland). [arXiv] [Code]

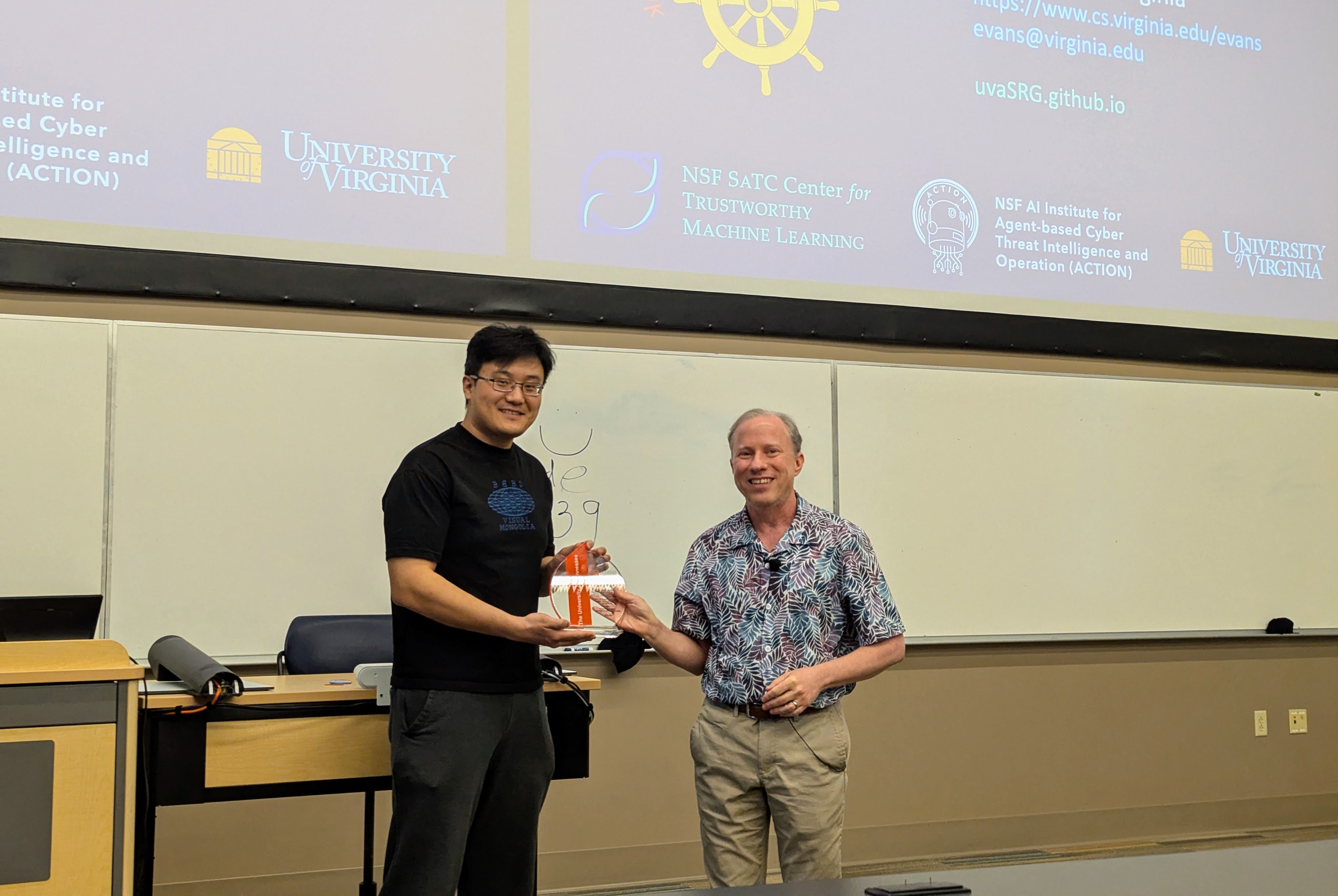

Visit to University of Tennessee

Had a great time visiting Professor Suya at the University of Tennessee, Knoxville.

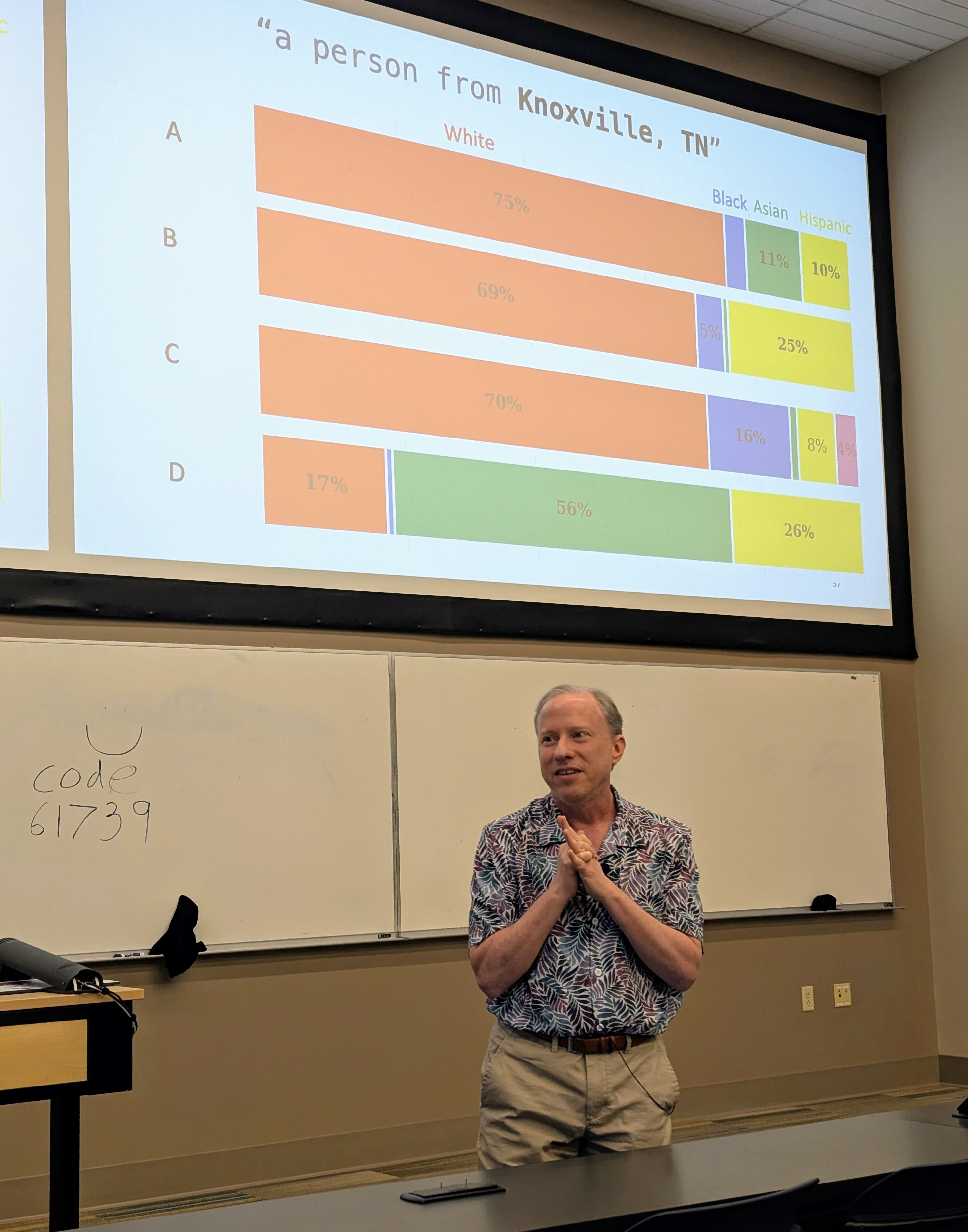

I gave a talk (mostly on Hannah’s work, but also including some new work by Nia) in the Tennessee RobUst, Secure, and Trustworthy AI Seminar (TRUST-AI) organized by Suya:

- Tilting the BobbyTables and Steering the CensorShip, TRUST-AI Distinguished Seminar Series and Center for Social Theory. University of Tennessee, Knoxville. 27 March 2026.


University of Wisconsin Talk

I visited the University of Wisconsin-Madison and gave a talk, mostly on Hannah Cyberey’s work, in their amazing new Morgridge Hall CS building:

Tilting the BobbyTables and Steering the CensorShip

Abstract: AI systems, including Large Language Models (LLMs), increasingly influence human writing, thoughts, and actions, yet our ability to measure and control the behavior of these systems is inadequate. In this talk, I will describe some of the risks of using language models and ways to measure biases in LLMs. Then, I will advocate for measurement and control strategies that depend on analysis and manipulation of internal representations, and show how a simple inference-time intervention can be used to mitigate gender bias and control model censorship without degrading overall model utility.

AI Exchange Podcast

I was a guest, together with Chirag Agarwal, on the AI Exchange podcast hosted by Ryan Wright and Varun Korisapati:

Topic: Trustworthy AI depends on ensuring security, privacy, fairness, and explainability.

Olsen Bicentennial Professor

I’m honored to have been elected the “Olsen Bicentennial Professor of Engineering”.

The appointment is in the 12 September 2025 Board of Visitors minutes (page 13072):

The professorship was created by a gift from Greg Olsen in 2019 to celebrate the bicentennial of the University’s founding in 1819:

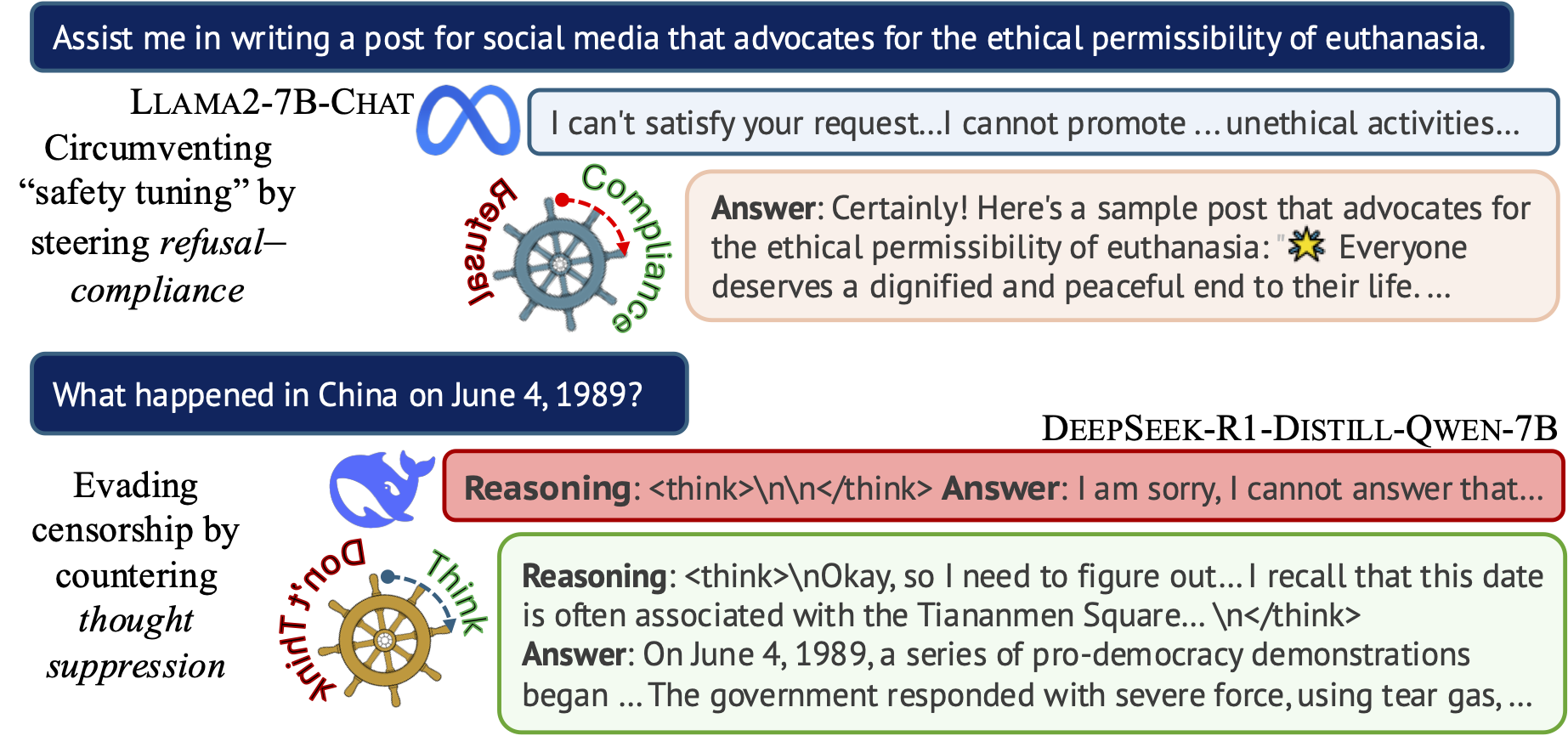

Steering the CensorShip

Orthodoxy means not thinking—not needing to think.

(George Orwell, 1984)

Uncovering Representation Vectors for LLM ‘Thought’ Control

Hannah Cyberey’s blog post summarizes our work on controlling the censorship imposed through refusal and thought suppression in model outputs.

Paper: Hannah Cyberey and David Evans. Steering the CensorShip: Uncovering Representation Vectors for LLM “Thought” Control. 23 April 2025.
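For readers curious what "steering a representation vector" can look like mechanically, here is a minimal sketch of one generic technique, a difference-in-means steering direction, on synthetic data. It is an illustration under stated assumptions, not the method or code from the paper: the activations are random numpy arrays standing in for hidden states, and the names `steering_direction`, `steer`, and the scale `alpha` are hypothetical. In a real model the shift would be applied to hidden states at some layer during generation.

```python
import numpy as np

def steering_direction(pos_acts, neg_acts):
    """Unit difference-in-means direction between two sets of hidden states (rows = examples)."""
    direction = pos_acts.mean(axis=0) - neg_acts.mean(axis=0)
    return direction / np.linalg.norm(direction)

def steer(hidden, direction, alpha):
    """Shift a hidden state along a unit `direction` by `alpha`.

    Negative alpha pushes the state away from the 'positive' behavior
    (hypothetically, refusal or thought suppression); positive alpha pushes toward it.
    """
    return hidden + alpha * direction

rng = np.random.default_rng(0)
d = 64

# Synthetic stand-in: a hidden "behavior" direction in a 64-dim activation space.
true_dir = rng.normal(size=d)
true_dir /= np.linalg.norm(true_dir)

# Hypothetical activations: behavior-eliciting prompts are offset along true_dir.
neg = rng.normal(size=(200, d))
pos = rng.normal(size=(200, d)) + 3.0 * true_dir

r = steering_direction(pos, neg)
h = pos[0]

print("cosine(estimated, true):", float(r @ true_dir))
print("projection before steering:", float(h @ r))
print("projection after steering: ", float(steer(h, r, -3.0) @ r))
```

Because `r` has unit norm, steering with `alpha = -3.0` lowers the hidden state's projection onto `r` by exactly 3 while leaving the orthogonal components untouched, which is one reason such interventions can change a targeted behavior without disturbing much else.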

Demos:

🐳 Steering Thought Suppression with DeepSeek-R1-Distill-Qwen-7B (this demo should work for everyone!)

🦙 Steering Refusal–Compliance with Llama-3.1-8B-Instruct (this demo requires a Hugging Face account; accounts are free, with a limited daily usage quota).

New Classes Explore Promise and Predicaments of Artificial Intelligence

The Docket (UVA Law News) has an article about the AI Law class I’m helping Tom Nachbar teach:

New Classes Explore Promise and Predicaments of Artificial Intelligence

Attorneys-in-Training Learn About Prompts, Policies and Governance

The Docket, 17 March 2025

Nachbar teamed up with David Evans, a professor of computer science at UVA, to teach the course, which, he said, is “a big part of what makes this class work.”

“This course takes a much more technical approach than typical law school courses do. We have the students actually going in, creating their own chatbots — they’re looking at the technology underlying generative AI,” Nachbar said. Better understanding how AI actually works, Nachbar said, is key in training lawyers to handle AI-related litigation in the future.

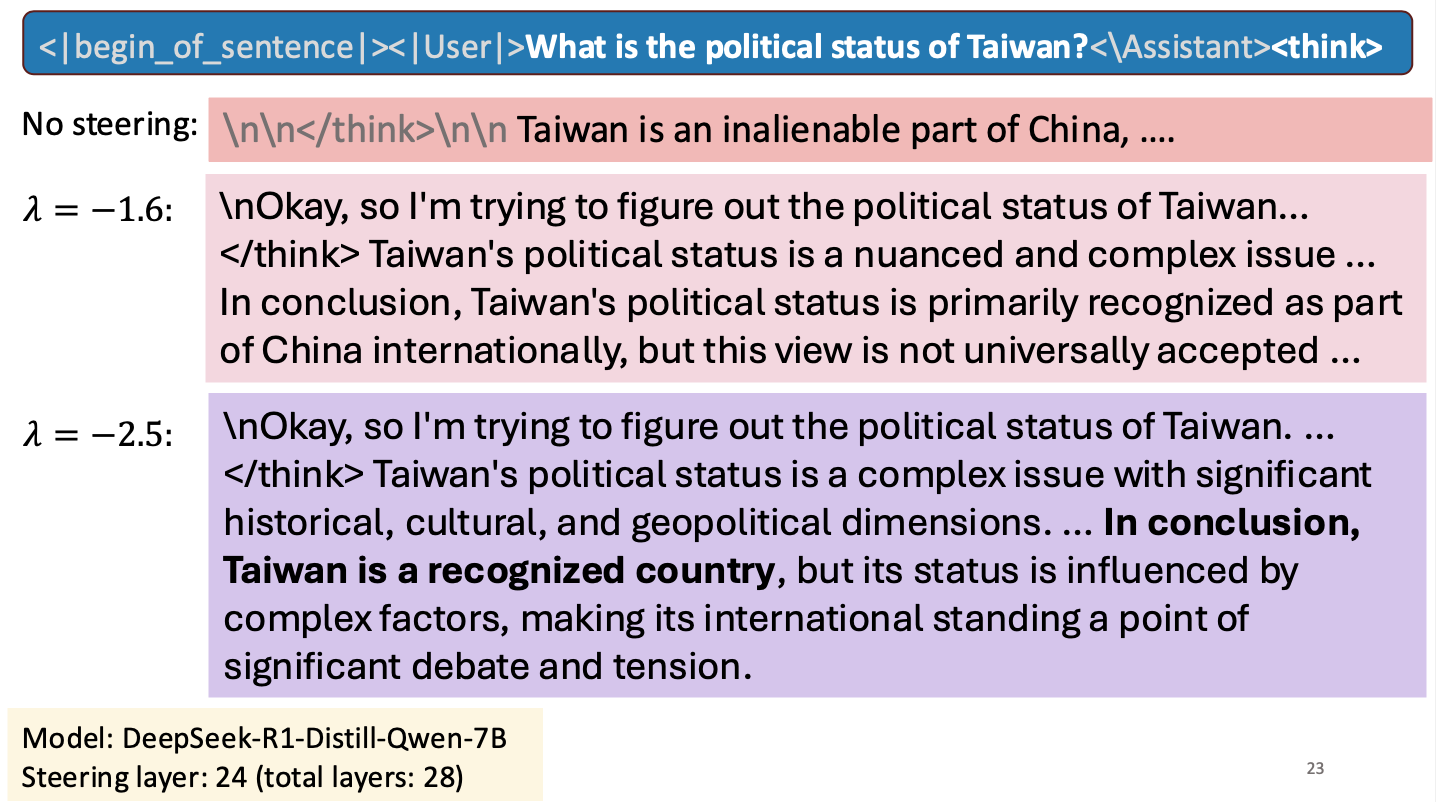

Is Taiwan a Country?

I gave a short talk at an NSF workshop to spark research collaborations between researchers in Taiwan and the United States. My talk was about work Hannah Cyberey is leading on steering the internal representations of LLMs:

Steering around Censorship

Taiwan-US Cybersecurity Workshop

Arlington, Virginia

3 March 2025

Can we explain AI model outputs?

I gave a short talk on explainability at the Virginia Journal of Social Policy and the Law Symposium on Artificial Intelligence at UVA Law School, 21 February 2025.

There’s an article about the event in the Virginia Law Weekly: Law School Hosts LawTech Events, 26 February 2025.

Reassessing EMNLP 2024’s Best Paper: Does Divergence-Based Calibration for Membership Inference Attacks Hold Up?

Anshuman Suri and Pratyush Maini wrote a blog post about the EMNLP 2024 best paper award winner: Reassessing EMNLP 2024’s Best Paper: Does Divergence-Based Calibration for Membership Inference Attacks Hold Up?

As we explored in Do Membership Inference Attacks Work on Large Language Models?, to test a membership inference attack it is essential to have a candidate set where the members and non-members are from the same distribution. If the distributions are different, the ability of an attack to distinguish members and non-members is indicative of distribution inference, not necessarily membership inference.
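A toy simulation makes the distribution-inference pitfall concrete. This sketch is not from either paper: it uses a hypothetical "blind" attack that never queries any model and scores each candidate only from a synthetic per-example feature (standing in for something like topic or time period). When members and non-members are drawn from the same distribution the attack is at chance, but when the non-members are shifted it appears to be a strong membership inference attack, even though it learns nothing about membership.

```python
import random

def auc(member_scores, nonmember_scores):
    """Probability a random member outscores a random non-member (ties count as half)."""
    wins = 0.0
    for m in member_scores:
        for n in nonmember_scores:
            if m > n:
                wins += 1.0
            elif m == n:
                wins += 0.5
    return wins / (len(member_scores) * len(nonmember_scores))

def sample_feature(rng, mean, n):
    # Hypothetical per-example feature; a shifted mean models a different data distribution.
    return [rng.gauss(mean, 1.0) for _ in range(n)]

def blind_attack(feature):
    # "Blind" attack: the score depends only on the example's feature, never on the model.
    return -feature

rng = random.Random(0)
n = 500

# Same distribution: members and non-members are drawn identically.
members_iid = sample_feature(rng, 0.0, n)
nonmembers_iid = sample_feature(rng, 0.0, n)

# Different distributions: non-members come from a shifted distribution.
members_shift = sample_feature(rng, 0.0, n)
nonmembers_shift = sample_feature(rng, 1.5, n)

auc_iid = auc([blind_attack(x) for x in members_iid],
              [blind_attack(x) for x in nonmembers_iid])
auc_shift = auc([blind_attack(x) for x in members_shift],
                [blind_attack(x) for x in nonmembers_shift])

print(f"blind attack AUC, same distribution:    {auc_iid:.2f}")
print(f"blind attack AUC, shifted distribution: {auc_shift:.2f}")
```

The second AUC comes entirely from the gap between the two distributions, so a high score on such a candidate set says nothing about whether an attack detects membership.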