Congratulations, Dr. Zhang!

Congratulations to Xiao Zhang for successfully defending his PhD thesis!

Xiao will join the CISPA Helmholtz Center for Information Security in Saarbrücken, Germany, this fall as a tenure-track faculty member.

The prevalence of adversarial examples raises questions about the reliability of machine learning systems, especially for their deployment in critical applications. Numerous defense mechanisms have been proposed that aim to improve a machine learning system’s robustness in the presence of adversarial examples. However, none of these methods are able to produce satisfactorily robust models, even for simple classification tasks on benchmarks. In addition to empirical attempts to build robust models, recent studies have identified intrinsic limitations for robust learning against adversarial examples. My research aims to gain a deeper understanding of why machine learning models fail in the presence of adversaries and to design ways to build more robust systems. In this dissertation, I develop a concentration estimation framework to characterize the intrinsic limits of robustness for typical classification tasks of interest. The proposed framework leads to the discovery that, compared with the concentration of measure, which was previously argued to be an important factor, the existence of uncertain inputs may explain more fundamentally the vulnerability of state-of-the-art defenses. Moreover, to further advance our understanding of adversarial examples, I introduce a notion of representation robustness based on mutual information, which is shown to be related to an intrinsic limit of model robustness for downstream classification tasks. Finally, I advocate for rethinking the current design goal of robustness and shed light on ways to build more robust machine learning systems, potentially escaping the intrinsic limits of robustness.

USENIX Security Symposium 2019

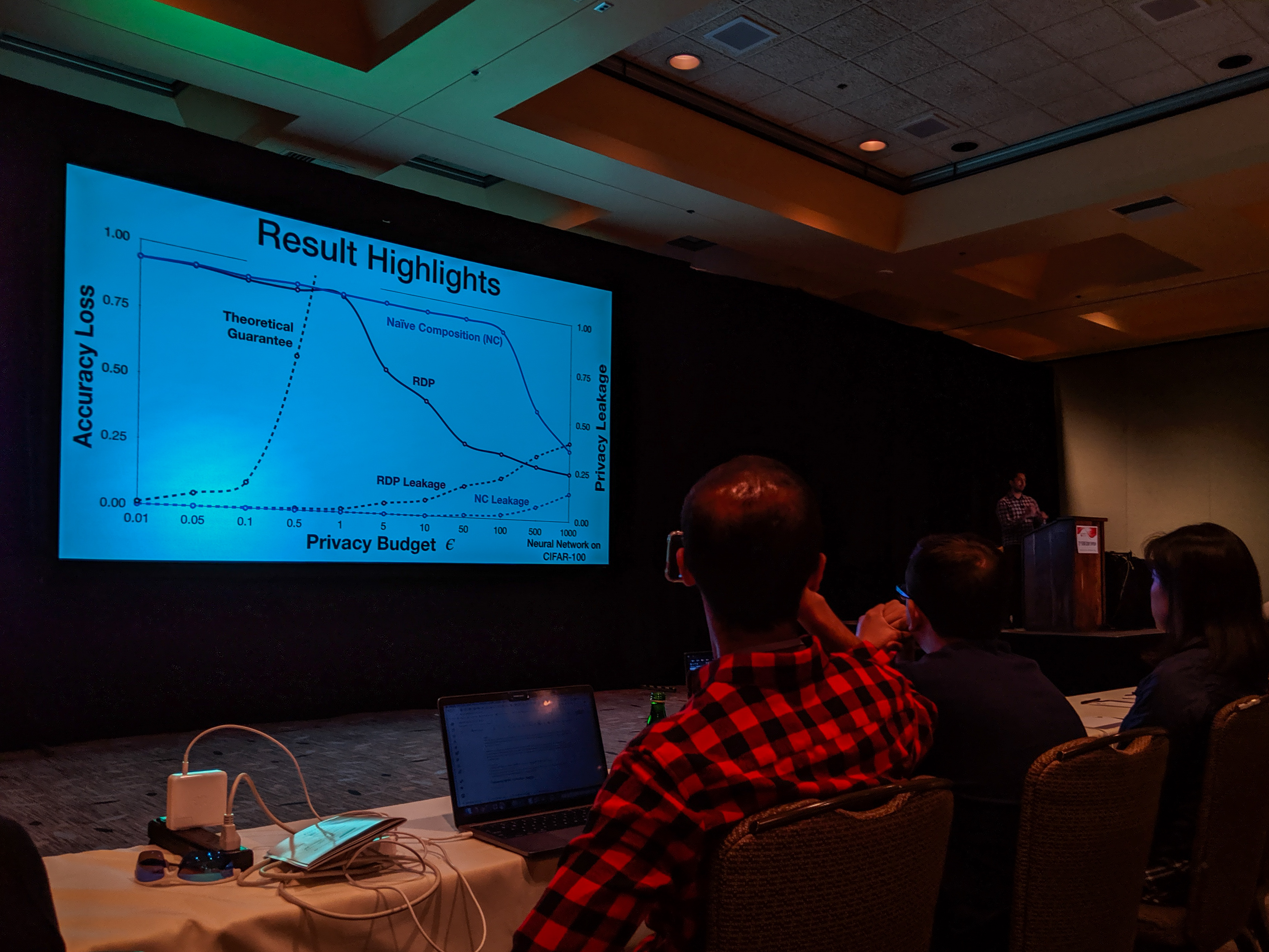

Bargav Jayaraman presented our paper on Evaluating Differentially Private Machine Learning in Practice at the 28th USENIX Security Symposium in Santa Clara, California.

Summary by Lea Kissner:

> Hey it's the results! pic.twitter.com/ru1FbkESho
>
> Lea Kissner (@LeaKissner), August 17, 2019

Also, great to see several UVA folks at the conference including:

- Sam Havron (BSCS 2017, now a PhD student at Cornell) presented a paper on the work he and his colleagues have done on computer security for victims of intimate partner violence.

- Serge Egelman (BSCS 2004) was an author on the paper 50 Ways to Leak Your Data: An Exploration of Apps’ Circumvention of the Android Permissions System (which was recognized by a Distinguished Paper Award). His paper in SOUPS on Privacy and Security Threat Models and Mitigation Strategies of Older Adults was highlighted in Alex Stamos’ excellent talk.

Congratulations, Dr. Xu!

Congratulations to Weilin Xu for successfully defending his PhD thesis!

Weilin's Committee: Homa Alemzadeh, Yanjun Qi, Patrick McDaniel (on screen), David Evans, Vicente Ordóñez Román

Improving Robustness of Machine Learning Models using Domain Knowledge

Although machine learning techniques have achieved great success in many areas, such as computer vision, natural language processing, and computer security, recent studies have shown that they are not robust under attack. A motivated adversary is often able to craft input samples that force a machine learning model to produce incorrect predictions, even if the target model achieves high accuracy on normal test inputs. This raises great concern when machine learning models are deployed for security-sensitive tasks.