David Evans, professor of computer science in the University of Virginia School of Engineering and Applied Science, is leading research to understand how machine learning models can be compromised.

ICLR DPML 2021: Inference Risks for Machine Learning

I gave an invited talk at the Distributed and Private Machine Learning (DPML) workshop at ICLR 2021 on Inference Risks for Machine Learning.

The talk mostly covers work by Bargav Jayaraman on evaluating privacy in machine learning and connecting attribute inference and imputation, and recent work by Anshuman Suri on property inference.

Codaspy 2021 Keynote: When Models Learn Too Much

Here are the slides for my talk at the 11th ACM Conference on Data and Application Security and Privacy:

The talk includes Bargav Jayaraman’s work (with Katherine Knipmeyer, Lingxiao Wang, and Quanquan Gu) on evaluating privacy in machine learning, as well as more recent work by Anshuman Suri on property inference attacks and by Bargav on attribute inference and imputation:

- Merlin, Morgan, and the Importance of Thresholds and Priors

- Evaluating Differentially Private Machine Learning in Practice

“When models learn too much.” Dr. David Evans @UdacityDave of University of Virginia gave a keynote talk on different inference risks for machine learning models this morning at #codaspy21 pic.twitter.com/KVgFoUA6sa

CrySP Talk: When Models Learn Too Much

I gave a talk on When Models Learn Too Much at the University of Waterloo (virtually) in the CrySP Speaker Series on Privacy (29 March 2021):

Abstract: Statistical machine learning uses training data to produce models that capture patterns in that data. When models are trained on private data, such as medical records or personal emails, there is a risk that those models not only learn the hoped-for patterns, but will also learn and expose sensitive information about their training data. Several different types of inference attacks on machine learning models have been found, and methods have been proposed to mitigate the risks of exposing sensitive aspects of training data. Differential privacy provides formal guarantees bounding certain types of inference risk, but, at least with state-of-the-art methods, providing substantive differential privacy guarantees requires adding so much noise to the training process for complex models that the resulting models are useless. Experimental evidence, however, suggests that inference attacks have limited power, and in many cases a very small amount of privacy noise seems to be enough to defuse inference attacks. In this talk, I will give an overview of a variety of different inference risks for machine learning models, talk about strategies for evaluating model inference risks, report on some experiments by our research group to better understand the power of inference attacks in more realistic settings, and explore some broader connections between privacy, fairness, and adversarial robustness.

Microsoft Security Data Science Colloquium: Inference Privacy in Theory and Practice

Here are the slides for my talk at the Microsoft Security Data Science Colloquium:

When Models Learn Too Much: Inference Privacy in Theory and Practice [PDF]

The talk is mostly about Bargav Jayaraman’s work (with Katherine Knipmeyer, Lingxiao Wang, and Quanquan Gu) on evaluating privacy:

- Merlin, Morgan, and the Importance of Thresholds and Priors

- Evaluating Differentially Private Machine Learning in Practice

Merlin, Morgan, and the Importance of Thresholds and Priors

Post by Katherine Knipmeyer

Machine learning poses a substantial risk that adversaries will be able to discover information that the model does not intend to reveal. One set of methods by which consumers can learn this sensitive information, known broadly as membership inference attacks, predicts whether or not a query record belongs to the training set. A basic membership inference attack involves an attacker with a given record and black-box access to a model who tries to determine whether said record was a member of the model’s training set.
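To make the basic attack concrete, here is a minimal sketch of a loss-threshold membership inference attack. It is only an illustration, not the Merlin or Morgan attacks from the post: the toy "model" is a 1-nearest-neighbor regressor standing in for a badly overfit model, and the names `train_memorizing_model`, `infer_membership`, and `make_record` are hypothetical. The attacker only calls `predict` (black-box access) and guesses "member" when the loss on the query record is below a threshold.

```python
import random


def train_memorizing_model(train):
    """Toy stand-in for an overfit model: a 1-nearest-neighbor regressor.

    Returns a black-box predict(x) function; the attacker never sees `train`.
    """
    def predict(x):
        _, nearest_y = min(train, key=lambda rec: abs(rec[0] - x))
        return nearest_y
    return predict


def infer_membership(predict, record, threshold):
    """Guess that `record` was in the training set if its squared loss is small."""
    x, y = record
    loss = (predict(x) - y) ** 2
    return loss <= threshold


def make_record(rng):
    """Sample one (x, y) record from a noisy linear relationship (assumed data)."""
    x = rng.uniform(0, 10)
    y = 2 * x + rng.gauss(0, 1)
    return (x, y)


rng = random.Random(0)
members = [make_record(rng) for _ in range(50)]      # used for training
non_members = [make_record(rng) for _ in range(50)]  # same distribution, held out
model = train_memorizing_model(members)

threshold = 1e-9  # assumed threshold; choosing it well is the hard part in practice
tpr = sum(infer_membership(model, r, threshold) for r in members) / len(members)
fpr = sum(infer_membership(model, r, threshold) for r in non_members) / len(non_members)
print(f"true positive rate: {tpr:.2f}, false positive rate: {fpr:.2f}")
```

Because this toy model memorizes its training data exactly, the attack separates members from non-members almost perfectly. Real models generalize better, so the gap between member and non-member losses is much smaller and the choice of threshold matters a great deal.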

Evaluating Differentially Private Machine Learning in Practice

(Cross-post by Bargav Jayaraman)

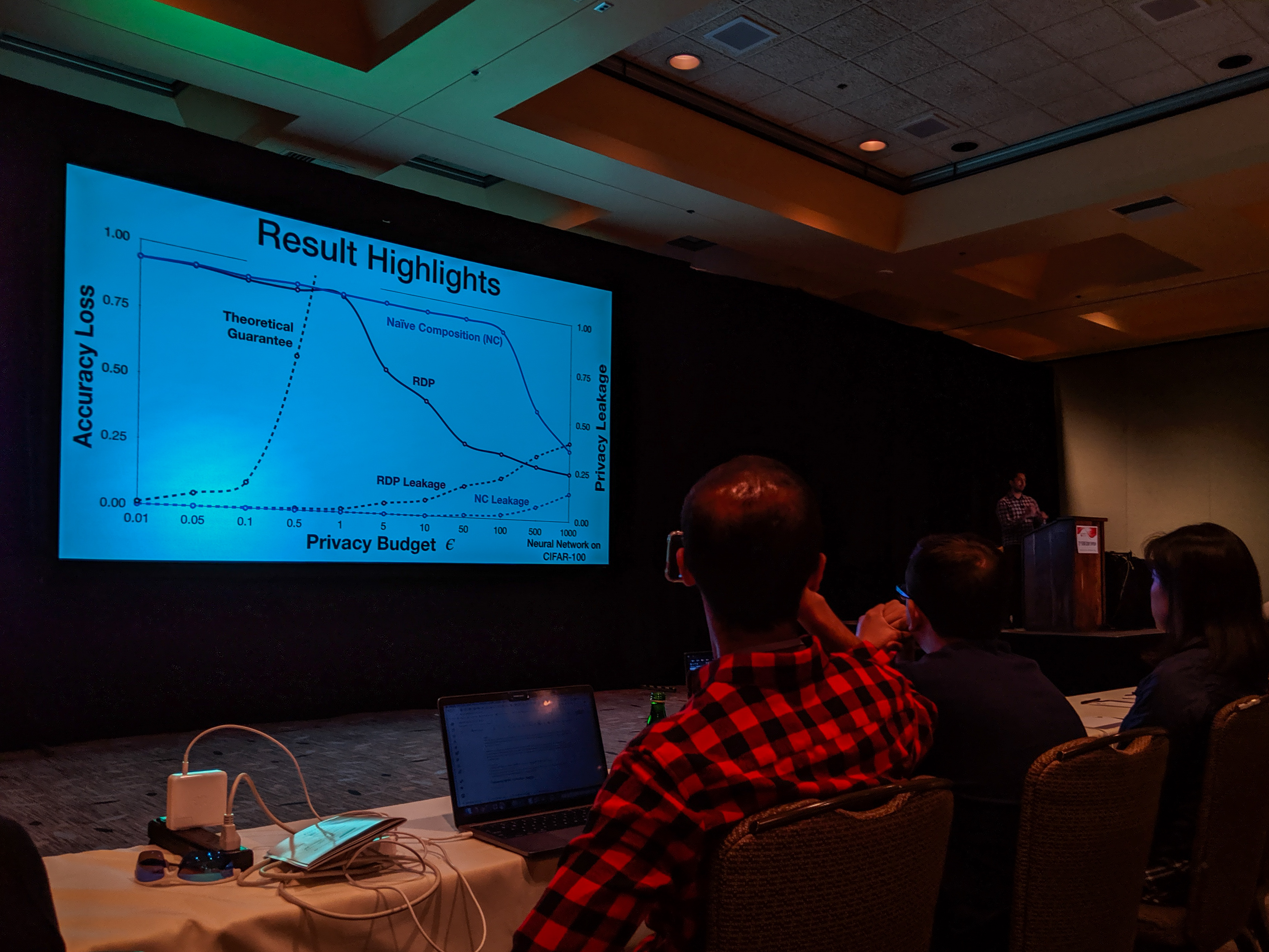

With the recent advances in composition of differentially private mechanisms, the research community has been able to achieve meaningful deep learning with privacy budgets in single digits. Rényi differential privacy (RDP) is one notion that provides tighter composition, and it is widely used because of its implementation in TensorFlow Privacy (recently, Gaussian differential privacy (GDP) has shown a tighter analysis for low privacy budgets, but it was not yet available when we did this work). But the central question that remains to be answered is: how private are these methods in practice?
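As a rough illustration of how RDP composition yields a privacy budget, the sketch below accounts for repeated applications of a Gaussian mechanism with sensitivity 1. It relies on two standard facts: the Gaussian mechanism with noise scale σ satisfies (α, α/(2σ²))-RDP, RDP parameters add up under composition, and (α, ε)-RDP implies (ε + log(1/δ)/(α − 1), δ)-DP. This is a simplified sketch that assumes no subsampling amplification, so it is not the accountant used in TensorFlow Privacy, and the function names and parameter values are hypothetical.

```python
import math


def gaussian_rdp(alpha, sigma, steps):
    """RDP epsilon at order alpha after `steps` Gaussian mechanisms (sensitivity 1)."""
    return steps * alpha / (2 * sigma ** 2)


def rdp_to_dp(alpha, rdp_eps, delta):
    """Convert an (alpha, rdp_eps)-RDP guarantee into an (epsilon, delta)-DP epsilon."""
    return rdp_eps + math.log(1 / delta) / (alpha - 1)


def best_epsilon(sigma, steps, delta, alphas):
    """Pick the RDP order that gives the smallest (epsilon, delta)-DP epsilon."""
    return min(rdp_to_dp(a, gaussian_rdp(a, sigma, steps), delta) for a in alphas)


# Assumed example values: 100 releases, noise scale 10, delta = 1e-5.
sigma, steps, delta = 10.0, 100, 1e-5
alphas = [1 + i / 10 for i in range(1, 1000)]  # candidate orders 1.1 .. 100.9
eps = best_epsilon(sigma, steps, delta, alphas)

# Closed-form minimum over alpha of the same bound, used as a sanity check.
closed_form = steps / (2 * sigma ** 2) + math.sqrt(2 * steps * math.log(1 / delta)) / sigma
print(f"grid search epsilon: {eps:.3f}, closed form: {closed_form:.3f}")
```

With these assumed values the composed budget lands in the single digits, which is the regime the post refers to. Practical accountants for private deep learning also account for subsampling, which this sketch leaves out.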

USENIX Security Symposium 2019

Bargav Jayaraman presented our paper on Evaluating Differentially Private Machine Learning in Practice at the 28th USENIX Security Symposium in Santa Clara, California.

Summary by Lea Kissner:

Hey it's the results! pic.twitter.com/ru1FbkESho

— Lea Kissner (@LeaKissner) August 17, 2019

Also, great to see several UVA folks at the conference, including:

- Sam Havron (BSCS 2017, now a PhD student at Cornell) presented a paper on the work he and his colleagues have done on computer security for victims of intimate partner violence.

- Serge Egelman (BSCS 2004) was an author on the paper 50 Ways to Leak Your Data: An Exploration of Apps’ Circumvention of the Android Permissions System (which was recognized by a Distinguished Paper Award). His paper in SOUPS on Privacy and Security Threat Models and Mitigation Strategies of Older Adults was highlighted in Alex Stamos’ excellent talk.

How AI could save lives without spilling medical secrets

I’m quoted in this article by Will Knight focused on the work Oasis Labs (Dawn Song’s company) is doing on privacy-preserving medical data analysis: How AI could save lives without spilling medical secrets, MIT Technology Review, 14 May 2019.

“The whole notion of doing computation while keeping data secret is an incredibly powerful one,” says David Evans, who specializes in machine learning and security at the University of Virginia. When applied across hospitals and patient populations, for instance, machine learning might unlock completely new ways of tying disease to genomics, test results, and other patient information.