University of Wisconsin Talk

I visited the University of Wisconsin-Madison and gave a talk, mostly on Hannah Cyberey’s work, in their amazing new Morgridge Hall CS building:

Tilting the BobbyTables and Steering the CensorShip

Abstract: AI systems, including Large Language Models (LLMs), increasingly influence human writing, thoughts, and actions, yet our ability to measure and control the behavior of these systems is inadequate. In this talk, I will describe some of the risks of using language models and ways to measure biases in LLMs. Then, I will advocate for measurement and control strategies that depend on analysis and manipulation of internal representations, and show how a simple inference-time intervention can be used to mitigate gender bias and control model censorship without degrading overall model utility.
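The abstract does not spell out the intervention. One widely used family of inference-time interventions works directly on hidden activations: estimate a concept direction as the difference between mean activations on two contrasting sets of prompts, then adjust each hidden state's component along that direction. The sketch below shows that generic idea in plain Python. It is an illustration under stated assumptions, not the specific method from the talk, and the function names and toy vectors are hypothetical. In a real model, the activations would be read from a chosen layer of the LLM and the edit applied during the forward pass.

```python
import math
from typing import List, Sequence

Vector = List[float]


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def mean_vector(vectors: Sequence[Sequence[float]]) -> Vector:
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def steering_direction(pos_acts: Sequence[Sequence[float]],
                       neg_acts: Sequence[Sequence[float]]) -> Vector:
    """Unit-length difference of mean activations between two contrasting prompt sets.

    Hypothetical helper: pos_acts/neg_acts stand in for hidden states collected
    from one layer on prompts that do / do not express the concept.
    """
    diff = [p - q for p, q in zip(mean_vector(pos_acts), mean_vector(neg_acts))]
    norm = math.sqrt(dot(diff, diff))
    if norm == 0.0:
        raise ValueError("the two activation sets have identical means")
    return [d / norm for d in diff]


def steer(hidden: Sequence[float], direction: Sequence[float], alpha: float) -> Vector:
    """Replace the component of `hidden` along the unit `direction` with `alpha`.

    alpha = 0 removes the concept direction entirely (directional ablation);
    other values push the representation toward one side of the concept.
    """
    proj = dot(hidden, direction)
    return [h - proj * d + alpha * d for h, d in zip(hidden, direction)]


# Toy usage with 2-D "activations" (hypothetical data).
v = steering_direction([[1.0, 0.0], [3.0, 0.0]], [[-1.0, 0.0], [-3.0, 0.0]])
print(v)                        # [1.0, 0.0]
print(steer([5.0, 2.0], v, 0))  # [0.0, 2.0]: concept component removed
```

Setting the projection to a fixed value, rather than adding a fixed multiple of the direction, makes the resulting projection equal `alpha` wherever the hidden state started; which variant works better for a given model is an empirical question.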

AI Exchange Podcast

I was a guest, together with Chirag Agarwal, on the AI Exchange podcast hosted by Ryan Wright and Varun Korisapati:

Topic: Trustworthy AI depends on ensuring security, privacy, fairness, and explainability.

Olsen Bicentennial Professor

I’m honored to have been elected the “Olsen Bicentennial Professor of Engineering”.

The appointment is in the 12 September 2025 Board of Visitors minutes (page 13072):

The professorship was created by a gift from Greg Olsen in 2019 to celebrate the bicentennial of the University’s founding in 1819:

Congratulations, Dr. Cyberey!

Congratulations to Hannah Cyberey for successfully defending her PhD thesis!

Sensitivity Auditing for Trustworthy Language Models

Large language models (LLMs) have demonstrated impressive capabilities across a wide range of tasks. Yet, they remain unreliable and pose serious social and ethical risks, including reinforcing social stereotypes, spreading misinformation, and facilitating malicious uses. Despite their growing presence in high-stakes settings, current evaluation practices often fail to address these risks.

This dissertation aims to advance the reliability of LLMs by developing rigorous, context-aware evaluation methodologies. We argue that model reliability should be assessed with respect to its intended uses (i.e., how it should operate and under what context) through fine-grained measurements beyond binary judgments. We propose to (1) improve evaluation reliability, (2) design mitigation strategies to control model behavior, and (3) develop auditing techniques for accountability.

Meet Professor Suya!

Meet Assistant Professor Fnu Suya. His research interests include the application of machine learning techniques to security-critical applications and the vulnerabilities of machine learning models in the presence of adversaries, generally known as trustworthy machine learning.

— EECS (@EECS_UTK) October 7, 2024

Congratulations, Dr. Suri!

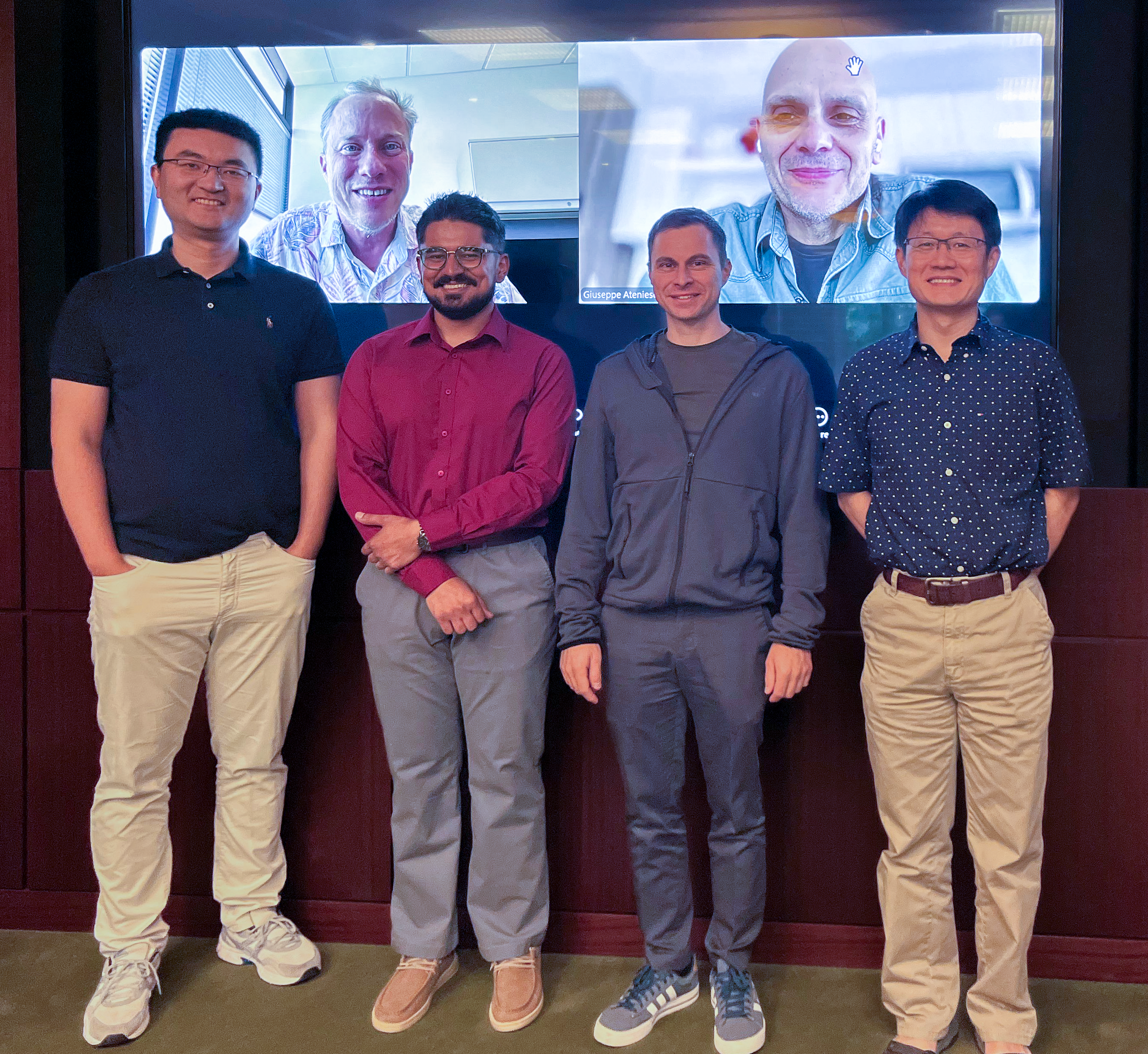

Congratulations to Anshuman Suri for successfully defending his PhD thesis!

Tianhao Wang, Dr. Anshuman Suri, Nando Fioretto, Cong Shen

On screen: David Evans, Giuseppe Ateniese

Inference Privacy in Machine Learning

Using machine learning models comes at the risk of leaking information about data used in their training and deployment. This leakage can expose sensitive information about properties of the underlying data distribution, data from participating users, or even individual records in the training data. In this dissertation, we develop and evaluate novel methods to quantify and audit such information disclosure at three granularities: distribution, user, and record.

Graduation 2024

Congratulations to our two PhD graduates!

Suya will be joining the University of Tennessee at Knoxville as an Assistant Professor.

Josie will be building a medical analytics research group at Dexcom.

Congratulations, Dr. Lamp!

Tianhao Wang (Committee Chair), Miaomiao Zhang, Lu Feng (Co-Advisor), Dr. Josie Lamp, David Evans

On screen: Sula Mazimba, Rich Nguyen, Tingting Zhu

Congratulations to Josephine Lamp for successfully defending her PhD thesis!

Trustworthy Clinical Decision Support Systems for Medical Trajectories

The explosion of medical sensors and wearable devices has resulted in the collection of large amounts of medical trajectories. Medical trajectories are time series that provide a nuanced look into patient conditions and their changes over time, allowing for a more fine-grained understanding of patient health. It is difficult for clinicians and patients to make effective use of such high-dimensional data, especially since there may be years’ or even decades’ worth of data per patient. Clinical Decision Support Systems (CDSS) provide summarized, filtered, and timely information to patients or clinicians to help inform medical decision-making processes. Although CDSS have shown promise for data sources such as tabular and imaging data, e.g., in electronic health records, the opportunities of CDSS using medical trajectories have not yet been realized due to challenges surrounding data use, model trust and interpretability, and privacy and legal concerns.

Congratulations, Dr. Suya!

Congratulations to Fnu Suya for successfully defending his PhD thesis!

Suya will join the University of Maryland as an MC2 Postdoctoral Fellow at the Maryland Cybersecurity Center this fall.

On the Limits of Data Poisoning Attacks

Current machine learning models require large amounts of labeled training data, which are often collected from untrusted sources. Models trained on these potentially manipulated data points are prone to data poisoning attacks. My research aims to gain a deeper understanding of the limits of two types of data poisoning attacks: indiscriminate poisoning attacks, where the attacker aims to increase the test error on the entire dataset; and subpopulation poisoning attacks, where the attacker aims to increase the test error on a defined subset of the distribution. We first present an empirical poisoning attack that encodes the attack objectives into target models and then generates poisoning points that induce the target models (and hence the encoded objectives), with provable convergence. This attack achieves state-of-the-art performance for a diverse set of attack objectives and establishes a lower bound on the performance of the best possible poisoning attacks. More broadly, because the attack guarantees convergence to the target model that encodes the desired attack objective, our attack can also be applied to objectives related to other trustworthiness aspects (e.g., privacy, fairness) of machine learning.
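One way to read the model-targeted attack described above: pick a target model that already encodes the attacker's objective (say, a classifier that misclassifies a chosen subpopulation), then repeatedly retrain on the clean data plus the poison collected so far, and add whichever candidate point the current model fits much worse than the target does. The sketch below is a simplified illustration of that loop, assuming linear classifiers with hinge loss, a finite pool of candidate poisoning points, and a caller-supplied `train` function. All names are hypothetical; it is not the dissertation's implementation and does not reproduce its convergence analysis.

```python
from typing import Callable, List, Sequence, Tuple

# A labeled example: (feature vector, label in {-1, +1}).
Example = Tuple[Sequence[float], int]
Weights = Sequence[float]


def hinge_loss(w: Weights, x: Sequence[float], y: int) -> float:
    """Hinge loss of a linear classifier (no bias term) on one example."""
    margin = y * sum(wi * xi for wi, xi in zip(w, x))
    return max(0.0, 1.0 - margin)


def loss_gap(current_w: Weights, target_w: Weights, example: Example) -> float:
    """How much more loss the current model incurs than the target model on this example."""
    x, y = example
    return hinge_loss(current_w, x, y) - hinge_loss(target_w, x, y)


def select_poison_point(current_w: Weights, target_w: Weights,
                        candidates: Sequence[Example]) -> Example:
    """Pick the candidate on which the current model disagrees most with the target."""
    return max(candidates, key=lambda ex: loss_gap(current_w, target_w, ex))


def model_targeted_poisoning(
    clean: List[Example],
    target_w: Weights,
    candidates: Sequence[Example],
    train: Callable[[List[Example]], Weights],  # hypothetical: stands in for the victim's learner
    budget: int,
    tol: float = 1e-3,
) -> List[Example]:
    """Greedily add poisoning points that pull the retrained model toward target_w."""
    poison: List[Example] = []
    for _ in range(budget):
        current_w = train(clean + poison)  # victim retrains on clean + poison data
        best = select_poison_point(current_w, target_w, candidates)
        if loss_gap(current_w, target_w, best) <= tol:
            break  # no candidate costs the current model more than tol extra loss vs. the target
        poison.append(best)
    return poison
```

In practice `train` would fit the victim's actual learner and the candidate pool would be limited to points the attacker can plausibly inject; the `tol` check lets the loop stop early once the retrained model is about as good as the target on every candidate.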

Congratulations, Dr. Jayaraman!

Congratulations to Bargav Jayaraman for successfully defending his PhD thesis!

Dr. Jayaraman and his PhD committee: Mohammad Mahmoody, Quanquan Gu (UCLA Department of Computer Science, on screen), Yanjun Qi (Committee Chair, on screen), Denis Nekipelov (Department of Economics, on screen), and David Evans.

Bargav will join the Meta AI Lab in Menlo Park, CA as a postdoctoral researcher.

Analyzing the Leaky Cauldron: Inference Attacks on Machine Learning

Machine learning models have been shown to leak sensitive information about their training data. An adversary having access to the model can infer different types of sensitive information, such as learning if a particular individual’s data is in the training set, extracting sensitive patterns like passwords in the training set, or predicting missing sensitive attribute values for partially known training records. This dissertation quantifies this privacy leakage. We explore inference attacks against machine learning models, including membership inference, pattern extraction, and attribute inference. While our attacks give an empirical lower bound on the privacy leakage, we also provide a theoretical upper bound on the privacy leakage metrics. Our experiments across various real-world data sets show that the membership inference attacks can infer a subset of candidate training records with high attack precision, even in challenging cases where the adversary’s candidate set is mostly non-training records. In our pattern extraction experiments, we show that an adversary is able to recover email IDs, passwords, and login credentials from large transformer-based language models. Our attribute inference adversary is able to use underlying training distribution information inferred from the model to confidently identify candidate records with sensitive attribute values. We further evaluate the privacy risk implications for individuals contributing their data for model training. Our findings suggest that different subsets of individuals are vulnerable to different membership inference attacks, and that some individuals are repeatedly identified across multiple runs of an attack.

For attribute inference, we find that a subset of candidate records with a sensitive attribute value are correctly predicted by our white-box attribute inference attacks but would be misclassified by an imputation attack that does not have access to the target model. We explore different defense strategies to mitigate the inference risks, including approaches that avoid model overfitting, such as early stopping and differential privacy, and approaches that remove sensitive data from the training data. We find that differential privacy mechanisms can thwart membership inference and pattern extraction attacks, but even differential privacy fails to mitigate the attribute inference risks, since the attribute inference attack relies on the distribution information leaked by the model, whereas differential privacy provides no protection against leakage of distribution statistics.
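To make "attack precision" concrete, here is a minimal sketch of one of the simplest membership inference attacks: flag a candidate record as a training member when the target model's loss on it falls below a threshold, then measure precision as the fraction of flagged records that really were members. This is a generic loss-threshold attack, not the specific attacks from the dissertation, and the losses and membership labels below are hypothetical toy data.

```python
from typing import List, Sequence


def loss_threshold_attack(losses: Sequence[float], threshold: float) -> List[bool]:
    """Predict 'member' for each candidate whose loss under the target model is below threshold.

    Models tend to fit their training records more closely, so low loss is
    (weak) evidence that a record was in the training set.
    """
    return [loss < threshold for loss in losses]


def attack_precision(predicted: Sequence[bool], is_member: Sequence[bool]) -> float:
    """Fraction of records flagged as members that really are members."""
    true_pos = sum(1 for p, m in zip(predicted, is_member) if p and m)
    flagged = sum(1 for p in predicted if p)
    # No records flagged: precision is undefined; this sketch reports 0.0 by convention.
    return true_pos / flagged if flagged else 0.0


# Hypothetical example: four candidates, two of which were training records.
losses = [0.1, 0.2, 0.9, 1.5]
members = [True, True, False, False]
print(attack_precision(loss_threshold_attack(losses, 0.5), members))  # 1.0
print(attack_precision(loss_threshold_attack(losses, 1.0), members))  # 0.6666666666666666
```

Raising the threshold flags more candidates (here it also catches the non-member with loss 0.9), so precision drops. When most candidates are non-members, an adversary who wants high precision typically uses a strict threshold and flags only the records the model fits most closely.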