USENIX Security 2020: Hybrid Batch Attacks

New: Video Presentation

Finding Black-box Adversarial Examples with Limited Queries

Black-box attacks generate adversarial examples (AEs) against deep neural networks with only API access to the victim model.

Existing black-box attacks can be grouped into two main categories:

- Transfer Attacks use white-box attacks on local models to find candidate adversarial examples that transfer to the target model.

- Optimization Attacks use queries to the target model and apply optimization techniques to search for adversarial examples.
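To illustrate how an optimization attack spends queries, here is a minimal sketch that estimates gradients from score queries alone, using an NES-style finite-difference estimator with antithetic Gaussian samples. It is not the hybrid batch attack from the paper, and the target is a hypothetical toy linear scorer (`QueryCountingModel`) rather than a deep network; all names and parameter values are illustrative assumptions.

```python
import numpy as np


class QueryCountingModel:
    """Hypothetical toy black-box target: a fixed linear scorer that only
    returns a score (> 0 means class 1) and counts every query made."""

    def __init__(self, w: np.ndarray):
        self.w = np.asarray(w, dtype=float)
        self.queries = 0

    def score(self, x: np.ndarray) -> float:
        self.queries += 1
        return float(self.w @ x)


def estimate_gradient(model, x, sigma=0.01, samples=20, rng=None):
    """NES-style gradient estimate from scores only, using antithetic
    Gaussian directions. Costs 2 * samples queries."""
    if rng is None:
        rng = np.random.default_rng(0)
    grad = np.zeros(x.shape)
    for _ in range(samples):
        u = rng.standard_normal(x.shape)
        diff = model.score(x + sigma * u) - model.score(x - sigma * u)
        grad += diff * u
    return grad / (2 * sigma * samples)


def optimization_attack(model, x0, eps=0.5, step=0.05, max_iters=100, seed=0):
    """Signed-gradient search that drives the score to <= 0 while staying
    inside the L-infinity ball of radius eps around x0. Returns the
    adversarial point, or None if the iteration budget runs out."""
    rng = np.random.default_rng(seed)
    x0 = np.asarray(x0, dtype=float)
    x = x0.copy()
    for _ in range(max_iters):
        if model.score(x) <= 0:              # one query to check success
            return x
        g = estimate_gradient(model, x, rng=rng)
        x = x - step * np.sign(g)            # step to decrease the score
        x = np.clip(x, x0 - eps, x0 + eps)   # project back into the eps-ball
    return None
```

For example, with `model = QueryCountingModel(np.ones(10))` and `x0 = np.full(10, 0.1)`, `optimization_attack(model, x0)` searches for a point in the ε-ball with a non-positive score, and `model.queries` afterwards reports what the search cost. With the defaults, each gradient estimate alone costs 40 queries, which is why query budgets dominate the cost of this kind of attack.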

NeurIPS 2019: Empirically Measuring Concentration

Xiao Zhang will present our work (with Saeed Mahloujifar and Mohammad Mahmoody) as a spotlight at NeurIPS 2019, Vancouver, 10 December 2019.

Recent theoretical results, starting with Gilmer et al.’s Adversarial Spheres (2018), show that if inputs are drawn from a concentrated metric probability space, then adversarial examples with small perturbations are inevitable. The key insight from this line of research is that concentration of measure gives a lower bound on adversarial risk for a large collection of classifiers (e.g., imperfect classifiers with risk at least $\alpha$), which in turn implies impossibility results for robust learning against adversarial examples.
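As a concrete instance where this lower bound has a closed form (an example chosen here for illustration, not the empirical estimation method from this work): for inputs drawn from the standard Gaussian on $\mathbb{R}^n$ with $\ell_2$ perturbations of size $\epsilon$, the Gaussian isoperimetric inequality implies that any error region of measure at least $\alpha$ has an $\epsilon$-expansion of measure at least $\Phi(\Phi^{-1}(\alpha)+\epsilon)$, where $\Phi$ is the standard normal CDF. So every classifier with risk at least $\alpha$ has adversarial risk at least that value. The function name below is hypothetical.

```python
from statistics import NormalDist


def gaussian_adv_risk_lower_bound(alpha: float, eps: float) -> float:
    """Lower bound on adversarial risk (L2 budget eps) for any classifier
    with risk >= alpha, 0 < alpha < 1, when inputs are standard Gaussian,
    via the Gaussian isoperimetric inequality: Phi(Phi^{-1}(alpha) + eps)."""
    std = NormalDist()  # mean 0, standard deviation 1
    return std.cdf(std.inv_cdf(alpha) + eps)
```

For $\alpha = 0.01$, $\Phi^{-1}(\alpha) \approx -2.33$, so a perturbation budget of about 2.33 already forces adversarial risk of roughly 50%. The bound does not depend on $n$, while a typical Gaussian input has norm close to $\sqrt{n}$, so in high dimensions this budget is a small fraction of the input's size.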

White House Visit

I had a chance to visit the White House for a Roundtable on Accelerating Responsible Sharing of Federal Data. The meeting was held under the “Chatham House Rule”, so I won’t mention the other participants here.

The meeting was held in the Roosevelt Room of the White House. We entered through the visitor’s side entrance. After a security gate (where you put your phone in a lockbox, so no pictures inside) with a TV blaring Fox News, there is a pleasant lobby for waiting, and then an entrance right into the Roosevelt Room. (We didn’t get to see the entrance in the opposite corner of the room, which is just a hallway across from the Oval Office.)

Jobs for Humans, 2029-2059

I was honored to participate in a panel at an event on Adult Education in the Age of Artificial Intelligence that was run by The Great Courses as a fundraiser for the Academy of Hope, an adult public charter school in Washington, D.C.

I spoke first, following a few introductory talks, and was followed by Nicole Smith and Ellen Scully-Russ, and a keynote from Dexter Manley, Super Bowl winner with the Washington Redskins. After a short break, Kavitha Cardoza moderated a very interesting panel discussion. A recording of the talk and the rest of the event is supposed to be available to Great Courses Plus subscribers.

Research Symposium Posters

Five students from our group presented posters at the department’s Fall Research Symposium:

Anshuman Suri's Overview Talk

Cantor's (No Longer) Lost Proof

In preparing to cover Cantor’s proof of different infinite set cardinalities (one of my all-time favorite topics!) in our theory of computation course, I found various conflicting accounts of what Cantor originally proved. So, I figured it would be easy to search the web to find the original proof.

Shockingly, at least as far as I could find, it didn’t exist on the web! The closest I could find was the 1892 volume of the Jahresbericht der Deutschen Mathematiker-Vereinigung on Google Books (which many of the references pointed to), but that is not, in fact, the first volume of that journal, which contains the actual proof.
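The argument most often taught under Cantor's name is the diagonal argument. As an illustration of that modern textbook form (not a reconstruction of Cantor's original text), the sketch below represents an infinite binary sequence as a function from positions to bits; given any enumeration of such sequences, it builds a sequence that differs from the i-th one at position i, so the enumeration must miss it. The names `BitSeq`, `diagonal`, and `binary_expansion` are illustrative.

```python
from typing import Callable

# An infinite binary sequence, represented as a function from position n to a bit.
BitSeq = Callable[[int], int]


def diagonal(enumeration: Callable[[int], BitSeq]) -> BitSeq:
    """Given any enumeration i -> s_i of binary sequences, return the
    sequence d with d(n) = 1 - s_n(n). For every i, d differs from s_i at
    position i, so d is not s_i for any i."""
    return lambda n: 1 - enumeration(n)(n)


def binary_expansion(i: int) -> BitSeq:
    """Example enumeration: s_i is the binary expansion of the integer i,
    least significant bit first, padded with zeros forever."""
    return lambda n: (i >> n) & 1
```

With `binary_expansion` as the enumeration, `diagonal(binary_expansion)` is the all-ones sequence, since bit n of the integer n is always 0; and indeed no integer has infinitely many 1 bits.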

FOSAD Trustworthy Machine Learning Mini-Course

I taught a mini-course on Trustworthy Machine Learning at the 19th International School on Foundations of Security Analysis and Design in Bertinoro, Italy.

Slides from my three (two-hour) lectures are posted below, along with some links to relevant papers and resources.

Class 1: Introduction/Attacks

The PDF malware evasion attack is described in this paper:

Weilin Xu, Yanjun Qi, and David Evans. Automatically Evading Classifiers: A Case Study on PDF Malware Classifiers. Network and Distributed System Security Symposium (NDSS). San Diego, CA. 21-24 February 2016. [PDF] [EvadeML.org]

Class 2: Defenses

This paper describes the feature squeezing framework:

Evaluating Differentially Private Machine Learning in Practice

(Cross-post by Bargav Jayaraman)

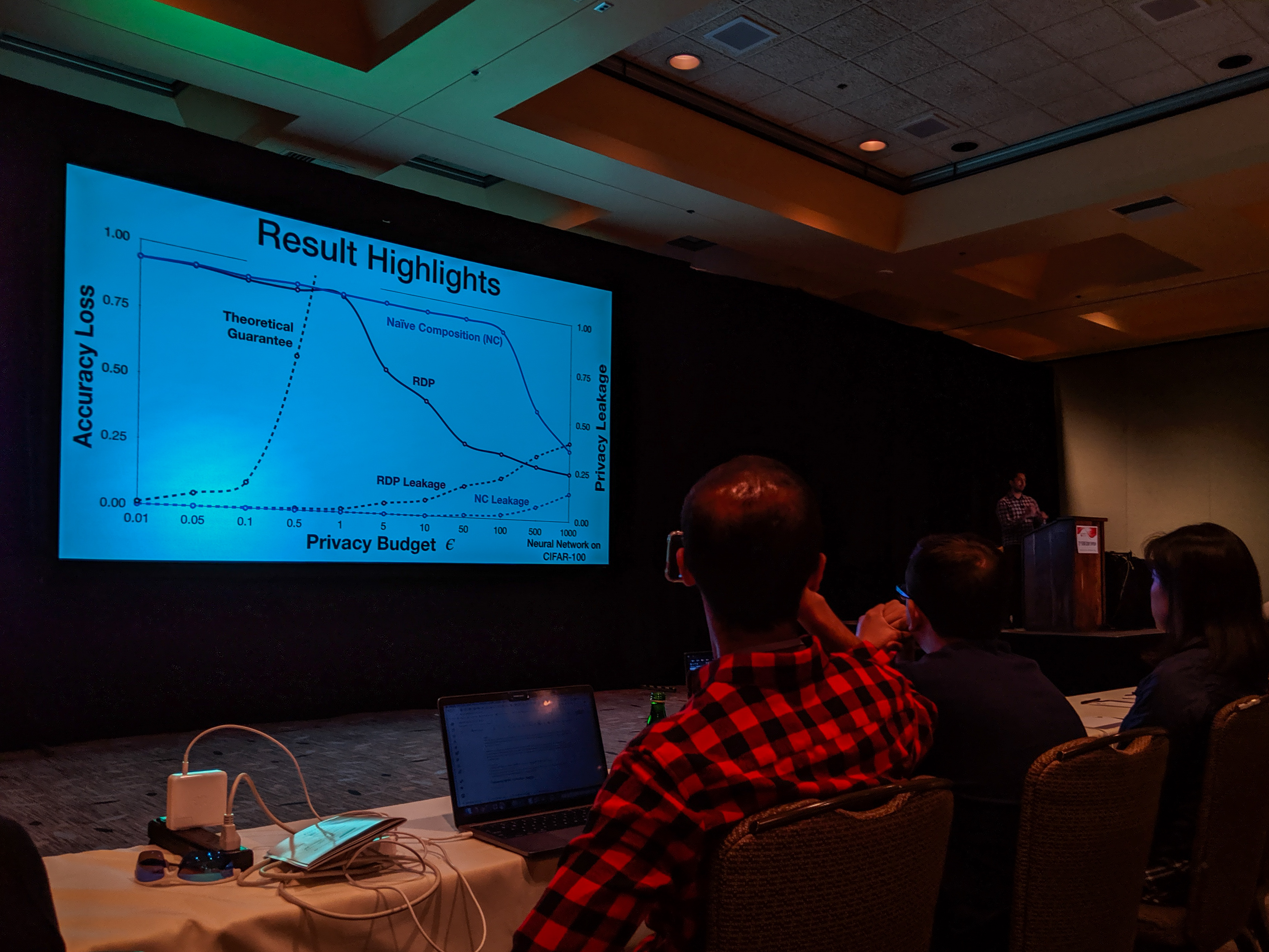

With the recent advances in composition of differentially private mechanisms, the research community has been able to achieve meaningful deep learning with privacy budgets in single digits. Rényi differential privacy (RDP) is one variant of differential privacy that provides tighter composition, and it is widely used because of its implementation in TensorFlow Privacy (recently, Gaussian differential privacy (GDP) has been shown to give a tighter analysis for low privacy budgets, but it was not yet available when we did this work). But the central question that remains to be answered is: how private are these methods in practice?
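The composition advantage of RDP can be made concrete with a small calculation. This is a sketch under stated assumptions, not the accounting code used in this work and not TensorFlow Privacy's API; the function names are hypothetical. It relies on three standard RDP facts: a Gaussian mechanism with $\ell_2$ sensitivity 1 and noise standard deviation $\sigma$ satisfies $(\alpha, \alpha/(2\sigma^2))$-RDP for every order $\alpha > 1$; RDP guarantees at the same order add under composition; and $(\alpha, \epsilon)$-RDP implies $(\epsilon + \log(1/\delta)/(\alpha-1), \delta)$-DP. It deliberately ignores privacy amplification by subsampling, which DP-SGD accounting depends on, so its numbers are far larger than the budgets reported for trained models.

```python
import math


def gaussian_rdp(order: float, sigma: float, steps: int) -> float:
    """RDP epsilon at the given order after `steps` uses of a Gaussian
    mechanism (L2 sensitivity 1, noise std sigma). Per-step cost is
    order / (2 sigma^2), and RDP composes additively."""
    return steps * order / (2 * sigma ** 2)


def rdp_to_dp(rdp_eps: float, order: float, delta: float) -> float:
    """Convert (order, rdp_eps)-RDP into the epsilon of (epsilon, delta)-DP."""
    return rdp_eps + math.log(1 / delta) / (order - 1)


def gaussian_dp_epsilon(sigma: float, steps: int, delta: float, orders=None) -> float:
    """Best (epsilon, delta)-DP bound over a grid of RDP orders (no subsampling)."""
    if orders is None:
        orders = [1 + x / 10 for x in range(1, 1000)]  # orders 1.1 .. 100.9 (arbitrary grid)
    return min(rdp_to_dp(gaussian_rdp(a, sigma, steps), a, delta) for a in orders)
```

For example, `gaussian_dp_epsilon(sigma=1.0, steps=1, delta=1e-5)` comes out at about 5.3; the minimization over orders is what lets RDP-based accounting give tighter totals than composing fixed $(\epsilon, \delta)$ guarantees step by step.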

USENIX Security Symposium 2019

Bargav Jayaraman presented our paper on Evaluating Differentially Private Machine Learning in Practice at the 28th USENIX Security Symposium in Santa Clara, California.

Summary by Lea Kissner:

Hey it's the results! pic.twitter.com/ru1FbkESho

— Lea Kissner (@LeaKissner) August 17, 2019

Also, great to see several UVA folks at the conference, including:

- Sam Havron (BSCS 2017, now a PhD student at Cornell) presented a paper on the work he and his colleagues have done on computer security for victims of intimate partner violence.

- Serge Egelman (BSCS 2004) was an author on the paper 50 Ways to Leak Your Data: An Exploration of Apps’ Circumvention of the Android Permissions System (which was recognized by a Distinguished Paper Award). His paper in SOUPS on Privacy and Security Threat Models and Mitigation Strategies of Older Adults was highlighted in Alex Stamos’ excellent talk.

Google Security and Privacy Workshop

I presented a short talk at a workshop at Google on Adversarial ML: Closing Gaps between Theory and Practice (mostly fun for the movie of me trying to solve Google’s CAPTCHA on the last slide):

Getting the actual screencast to fit into the limited time for this talk challenged the limits of my video editing skills.

I can say with some confidence, Google does donuts much better than they do cookies!